
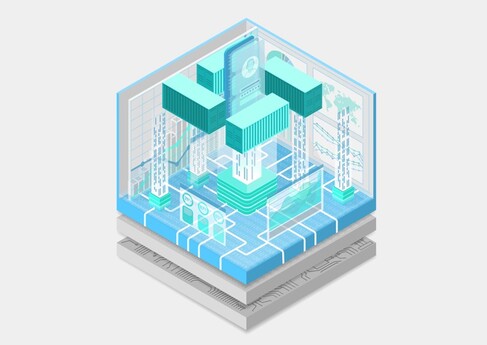

Docker has changed the landscape of web and API application development. The increased speed, flexibility, and security made possible by containers have led to a dramatic increase in interest in using that same technology throughout the entire application lifecycle. Many technologies have evolved to make this possible. Amazon Web Services (AWS) Elastic Container Service (ECS), Red Hat's OpenShift, and Kubernetes are just some of the options that help speed the deployment of these new applications.

Before venturing into this new containerized world, we want to point out one important aspect: containers are not virtual machines. Although many container images carry familiar names such as Ubuntu and Debian, there is a fundamental difference. While VMs focus on providing a virtualized full operating system and machine environment, containers focus on application virtualization. Instead of covering the myriad options and performance tweaks possible for each underlying container deployment platform, we will focus on three application-focused areas that can help ensure predictable performance.

Application Suitability

Just because you can does not mean you should. The first thing to examine when deploying a containerized application is suitability. Microservice-based applications and systems are designed to be ephemeral, scale horizontally, and consume small quantities of resources. These qualities make them a natural fit for a containerized environment. Large monolithic applications are typically not well suited to production deployment in containers. These legacy applications tend to consume large amounts of resources, scale vertically, and depend on persistent storage, all of which make it difficult for them to scale and perform well in a containerized environment.

Application Communication and Consumption

Getting from here to there (safely). A service mesh (or service map) registers the available services and the containers that exist for each service. It also provides an encrypted channel for the services to communicate over. Implementations such as Istio, Consul, and AWS App Mesh/Cloud Map, among others, allow for advanced traffic management within a container management cluster. Incorporating a service mesh can significantly improve the performance, availability, discoverability, and security of your system by helping it scale efficiently and securely.

Application Instrumentation

Who is on first? The distributed nature of microservice-based architectures can make it difficult to determine what is causing a performance problem in the system. Distributed tracing within an application allows you to monitor application performance as a whole and to find bottlenecks more easily across the multiple microservices that make it up. Distributed tracing systems such as Jaeger, AWS X-Ray, New Relic, and Datadog, among others, allow you to inspect the performance and timing of each microservice.
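
As a rough illustration of the idea behind distributed tracing, the sketch below records one span per unit of work, links child spans to their parent, and tags every span in a request with the same trace ID. It is a minimal in-memory sketch, not how Jaeger, X-Ray, or any other product is implemented: the `Tracer` and `Span` classes, the service names, and the fake clock are all hypothetical, and nested calls in one process stand in for network calls between services. In a real system the trace ID would travel between services in request headers and spans would be exported to a tracing backend.

```python
import itertools
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass
from typing import Callable, Iterator, List, Optional


@dataclass
class Span:
    trace_id: str              # shared by every span in one request
    span_id: int               # unique within this tracer
    parent_id: Optional[int]   # None for the root span
    service: str
    name: str
    start: float
    end: float = 0.0

    @property
    def duration(self) -> float:
        return self.end - self.start


class Tracer:
    """Hypothetical in-memory tracer used only for illustration."""

    def __init__(self, clock: Callable[[], float] = time.perf_counter) -> None:
        self._clock = clock
        self._ids = itertools.count(1)
        self._stack: List[Span] = []    # currently open spans, innermost last
        self.finished: List[Span] = []  # completed spans, in completion order

    @contextmanager
    def span(self, service: str, name: str) -> Iterator[Span]:
        parent = self._stack[-1] if self._stack else None
        current = Span(
            trace_id=parent.trace_id if parent else uuid.uuid4().hex,
            span_id=next(self._ids),
            parent_id=parent.span_id if parent else None,
            service=service,
            name=name,
            start=self._clock(),
        )
        self._stack.append(current)
        try:
            yield current
        finally:
            current.end = self._clock()
            self._stack.pop()
            self.finished.append(current)

    def self_time(self, span: Span) -> float:
        """Time spent in the span itself, excluding its direct children."""
        children = sum(s.duration for s in self.finished
                       if s.parent_id == span.span_id)
        return span.duration - children

    def slowest(self) -> Span:
        """The span with the largest self time, i.e. the likely bottleneck."""
        return max(self.finished, key=self.self_time)


def demo() -> Tracer:
    # A fake clock keeps the example deterministic; timings are made up.
    ticks = iter([0.0, 1.0, 2.0, 3.0, 9.0, 10.0])
    tracer = Tracer(clock=lambda: next(ticks))
    with tracer.span("gateway", "GET /orders"):
        with tracer.span("auth", "verify token"):
            pass
        with tracer.span("orders-db", "query"):
            pass
    return tracer


if __name__ == "__main__":
    print(demo().slowest().service)  # prints "orders-db"
```

Ranking spans by self time rather than total duration is a deliberate choice here: the root span always has the longest total duration, so subtracting the time spent in child spans is what points at the service that is actually slow.
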
This timing data lets your team focus on the trouble areas. Without comprehensive distributed tracing, monitoring, and logging, achieving predictable performance in a large containerized ecosystem is challenging.

Keeping these three areas in mind while planning your containerized future will help ensure you are well positioned for predictable performance in the containerized world.




8229 Boone Boulevard - Suite 885 - Vienna, VA 22182

© Systems Engineering Solutions Corporation 2003 - 2022
